The university class from 2012 that's saving me from bad stats and viral panic

Content on the Internet is full of what I call Shaky Stats.

These numbers look great and feel convenient. And they’re the basis for so many viral pieces and popular narratives. It’s a vicious cycle. An authoritative thought leader or author mentions a popular stat. You (and other people) think it’s credible. It gets mentioned more by the press and other people on the internet.

But these stats are like a paper tiger – poke them a little and they’ll start to fall apart.

It starts with having that bullshit detector instinct: feeling like something is funny about a number and not taking things at face value. That comes in handy whenever you interact with anything on the Internet, whether you're reading an article, watching a video, or using any sort of AI tool.

I was fascinated by digital literacy and close reading long before I knew what to call them.

I like to chalk this up to the Research Methods module I took as part of my university degree. (Honest to God, this is the only remnant of my formal university education that I still remember clearly after so many years. The rest I think I've given back to my school, oops, sorry.)

It was a module in the first year of university. We covered statistics and different types of research methods: reading case studies, evaluating journals, assessing reliability, and sampling data. In it, we learned the core skill of reading between the lines and asking ourselves if something could be trusted. Every source has its limitations and biases - so in that light, how much weight should we give a study or author?

Yep, it sounds boring and academic. But looking back, it was my favourite module in university, or at least the one with the most staying power considering what I'm doing now.

I was always interested in asking why something was the way it was. Fun fact: one of my first (now defunct) blog titles was Why We Like That? This was way back in 2012, when I was inspired by all the Dan Ariely books I was reading about behavioural psychology.

Yup, I’m a nerd, and quite proud of it lol.

I most enjoyed poring over dusty historical accounts of World War 2 and discussions around what a line meant in a Langston Hughes poem, or why he used an em dash over a comma and how it affected the reader's perception of the text. I think that's when I knew that I loved trying to figure out what the 'so what' was, and whether we came to an agreement in the discussion wasn't the point. It was the healthy exchange of ideas that expanded everyone's impression and appreciation of the work.

I didn't know it yet, but that was the same instinct I'd be applying to research, client briefs, and viral LinkedIn posts a decade later. In short, it's asking "but why tho". Here’s how I apply these instincts as I move through my day on the Internet: first when researching a stat for a piece of client work, then when staying grounded on emotionally charged social media platforms.

Case study #1: Investigating that 10,000 ads a day claim

Let's put my uni research skills to the test with this popular claim: we apparently see up to 10,000 ads a day. If you’re also in marketing, you've probably seen this cited in content about ad fatigue.

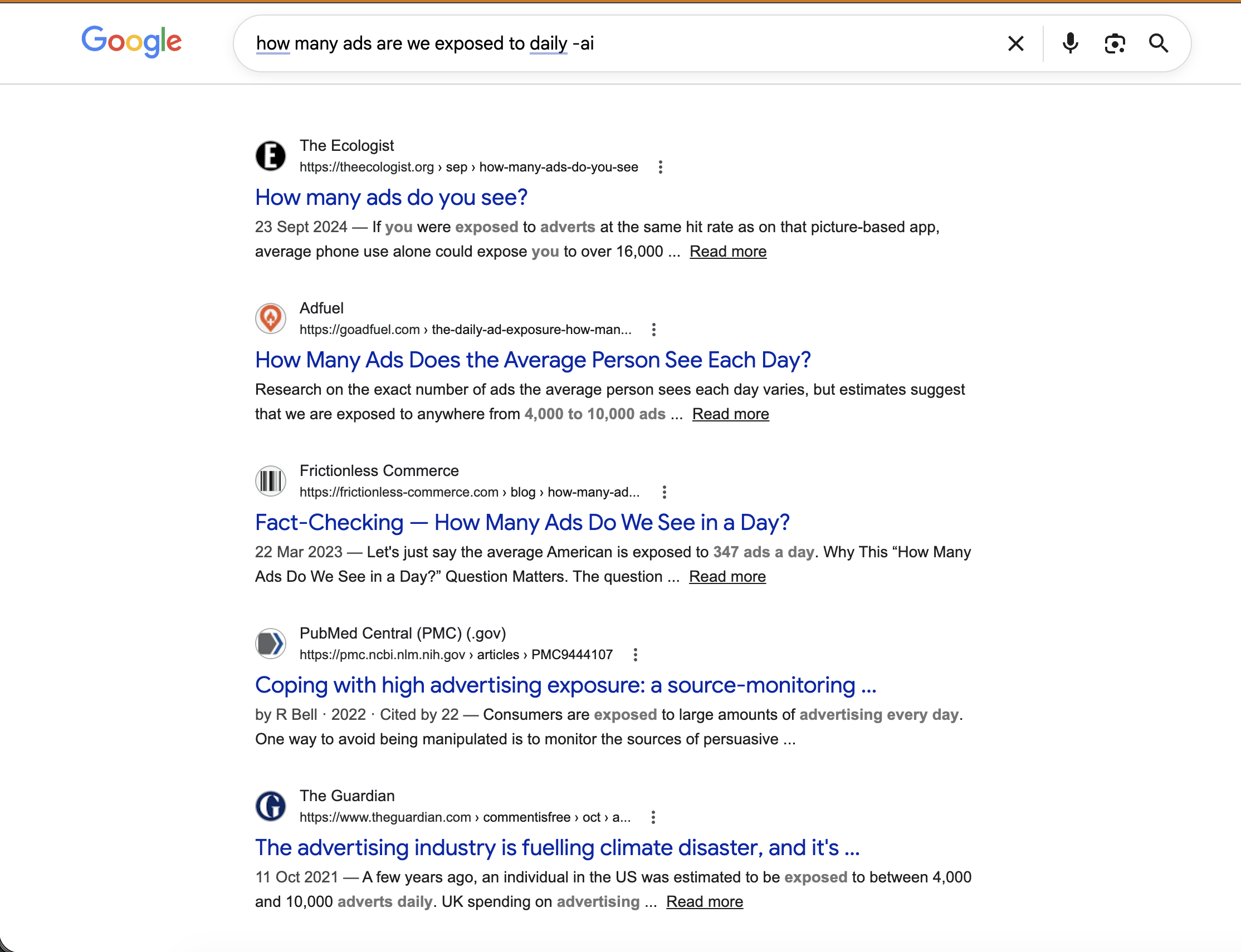

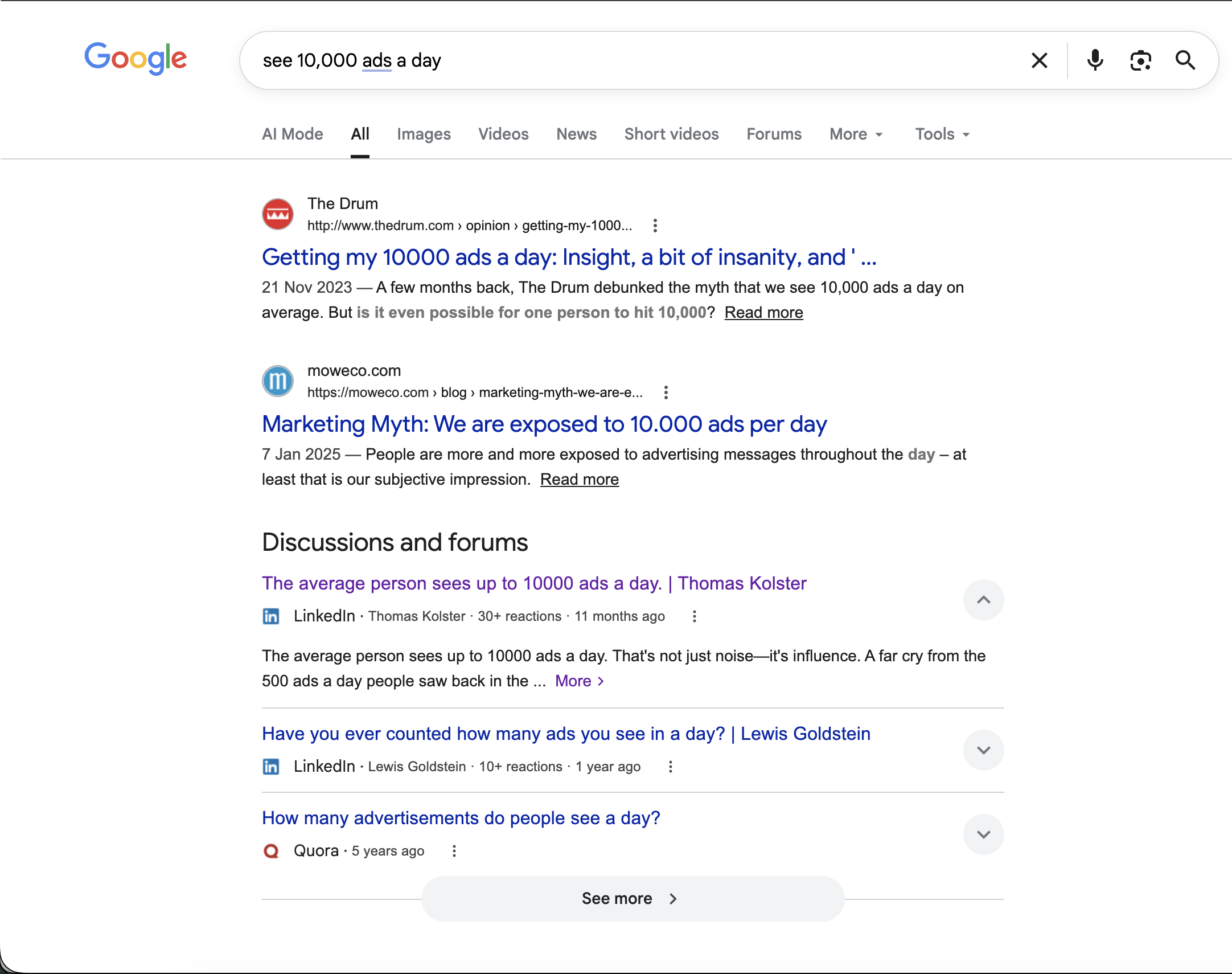

What shows up in the search results when you do a quick Google search

Notice how this stat becomes a starting point for discussions on social media too?

First, let’s do some simple math.

10,000 ads a day = about 625 ads every waking hour, assuming 16 waking hours.

But do you really see 625 ads every hour?

I get the appeal. 10,000 is a nice, round number. It's massive, and easy to remember. But I don’t buy it. It feels… too convenient.
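If you want to check that math yourself, here's a quick sketch in Python. The 16 waking hours (24 minus 8 hours of sleep) is my assumption, and the function names are made up for illustration.

```python
def ads_per_waking_hour(ads_per_day: float, waking_hours: float = 16) -> float:
    """Average ads per waking hour. The 16-hour default is an assumption."""
    return ads_per_day / waking_hours


def seconds_between_ads(ads_per_day: float, waking_hours: float = 16) -> float:
    """Average gap in seconds between ads, if they were spread evenly."""
    return 3600 / ads_per_waking_hour(ads_per_day, waking_hours)


if __name__ == "__main__":
    claim = 10_000  # the viral "ads per day" figure
    print(ads_per_waking_hour(claim))  # 625.0
    print(seconds_between_ads(claim))  # 5.76
```

Put differently, the claim implies a new ad roughly every six seconds, all day, with no breaks.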

Yet, it's a stat that's referenced and parroted everywhere in online content. It's so easy to go with the consensus of the masses: "Since so many people use this number, it's correct, right?"

Dig a little deeper, and these stats start to wobble.

For instance, the 10,000 figure only speaks to how many ads people are shown. It doesn’t tell you how many ads they actually remember. And people are pretty good nowadays at blocking out ads. So let’s see if we can find anything that counters it, or at least tells you whether the stat is safe to cite or should be cited with caution.

A 2023 Marketing Brew report citing Provoke Insights found that 41% of respondents, out of 1,500 Americans ages 21-65 surveyed, remembered only 1%-10% of the ads they'd seen in the past 24 hours.

Sounds good so far. But hang on, this study is from 2023, which is a little dated in 2026. Time for some good ol' detective work to see if there's anything more recent.

So I kept digging. A 2025 study by consumer insights platform Zappi found that many respondents could not remember what brand was being advertised — naturally, defeating the ad's purpose. The sheer volume of ads, they noted, can overwhelm consumers.

Put these two together and you start seeing holes in the 10,000 figure. People might be exposed to that many ads, but most of them don't register. Exposure and recall are two very different claims.
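To make that gap concrete, here's a rough back-of-the-envelope sketch in Python. It's purely illustrative: it takes the 10,000 figure at face value and applies the 1%-10% recall bracket from the Provoke Insights survey, even though the two numbers come from different sources and only 41% of respondents fell into that bracket. The function name is hypothetical.

```python
def remembered_range(exposures: int, low_pct: int, high_pct: int) -> tuple[int, int]:
    """Return (low, high) counts of ads remembered for a given recall bracket.

    Assumes recall applies evenly to every exposure, which is a simplification.
    """
    return exposures * low_pct // 100, exposures * high_pct // 100


if __name__ == "__main__":
    # 10,000 claimed exposures, 1%-10% recall bracket
    print(remembered_range(10_000, 1, 10))  # (100, 1000)
```

Under those assumptions, even the generous end of the bracket leaves about 9,000 ads a day that never register.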

Two studies, a bit of digging, and now you know what you're citing. That's the difference between sounding informed and looking like you just copied the first stat you saw.

Case study #2: Stress-testing trending social media content

This instinct came in handy again when I came across another viral post making the rounds on my LinkedIn feed. The post, from a well-followed content marketing voice on LinkedIn, claims that technology companies will 100% automate content creation, and that the output will be good enough to be extremely difficult to tell apart from human-written content.

But let's take a look at what they are really saying, and break it down. To stress-test this claim, I asked myself two questions. First, is this post even meant for me?

Looking at the post, the author is making a prediction about where content is heading. OK, fair. But the framing is designed to create anxiety, and anxiety lands hardest on people who are still working out where they stand on AI, or who don't yet understand how the tools work and what their limitations are. If you've spent real time testing these tools and building with them, the threat doesn't hit the same way.

Second, does the post earn its alarmist tone? I had to slow down here, because there’s a real point underneath the alarmist language. But the first thing that hits you is the emotional impact. That’s how posts go viral, after all.

I’ll be clear, it’s not wrong to appeal to emotion to get your posts seen. But it also means you have to separate what you're feeling from what's actually being claimed before you decide what to do with it.

I get why this deliberate research process feels like a lot of effort. You can't stress-test every piece of content you scroll past. But for posts that trigger that low-level anxiety, the ones that make you quietly wonder if the skills you've built up over a decade are about to be obsolete, a few minutes of deliberate thinking is worth it.

It keeps me grounded. And it gives me something to do with the uncertainty, rather than just sitting in it.

—

I nearly didn't pay attention in that module. I was in my first year of university, with my mind more interested in meeting people in our new dorms, or adapting to a new way of life in a country halfway across the world from Singapore. Plus, who likes reviewing research? It was so dry, and it didn't feel urgent at the time.

Well, joke's on me, I guess.

It's the only thing from that period of my life that shows up in my work every single week, and has shaped how I view everything on the Internet (or even in person). It's a module and style of study that's gone beyond theory, and sharpened my bullshit detector instinct.

Turns out, the most useful thing I took from university was a way of thinking critically about information. It was a habit of asking whether something actually holds up. That habit has become harder to switch off with every year I spend online, both as a creator and consumer of information.

—

Information overload was already a problem before AI showed up

Too much noise arriving faster than anyone could do anything useful with it.

AI hasn't created that problem. It's just made it more obvious.

Which means the differentiating factor is what you actually do with that information, and how sharp your bullshit detector is. Not just knowing something is off, but being able to poke at it, name what's wobbly, and decide how much weight to give it.

I’m thankful I paid attention to that research methods class over a decade ago. Hopefully, these examples show you how to think about a stat or perspective that’s put in front of you, even as we all prepare our brains to sift through more information and separate the trustworthy sources from the clickbait.

–

This is also how I help my clients succeed with their online research and content today.

Your brand’s content needs to build trust, and making sure you are able to correctly sift through information and build your arguments on firm foundations is one way you can do so.

Keen to work with me? Book a discovery call here.